The frustration of misinformation: Science’s battle to engage with the disinterested

We all know better than to air our disagreements online with people-from-school-who-we-don’t-really-know; and yet, I recently made the mistake of doing just that. Someone I went to school with shared a post claiming that epidural injections are “one of the worst and most dangerous injection[s] in the world” and that they commonly lead to suffering and chronic back pain in later life. My former classmate added the comment “and some girls actually want this?”

I almost scrolled past before stopping and realising – I’m a public health researcher and a science communicator, so isn’t this a perfect opportunity to reach out to someone in my own network and try to present another viewpoint, especially when this post could scare people off from a valid pain relief option in childbirth? To be clear, epidurals can lead to complications (as with any medical intervention), and may not always be completely effective, but the language of the post made it clear that there was a larger distrust of medical opinion at play here rather than a genuine attempt at educating people on the rare complications of the treatment. My gripe was mostly with the way information was being shared and the use of an inaccurate source.

Despite my efforts to avoid causing offence, I ultimately failed to get it right and my reply was a complete miss with my old classmate. But it got me thinking: how do we successfully engage with people who have already made up their minds on a topic? And particularly, how do we encourage people to choose reliable sources when sharing news or information online? If you (like me) have cheaped out by not paying for premium Spotify, you may have heard the UK Government ads about this. The advert is voiced by “a virus” who is pleased about how viral misinformation has allowed them to spread and, while a little cringey, it is positive that this issue is being recognised.

But it is concerning that we’ve reached such a state of viral misinformation that real, life-endangering consequences are starting to rear their heads (it was recently reported that the UK has lost its “measles-free” status following a fall in vaccine uptake [1]). It’s important to consider how we got here, and what we can do to rail against it.

Why Misinformation Keeps Winning

The spread of misinformation online continues to have negative consequences, most notably evidenced in recent reports on global trust in vaccines [2]. France has particularly low levels of trust in vaccines, with one in three people believing they are unsafe. Japan, Turkey, and the former USSR countries of Ukraine and Belarus also poll low on trust. One possible explanation could be previous let-downs by medical and scientific establishments in these countries (Chernobyl springs to mind). In France, a blood transfusion scandal in the 1990s, followed by a recalled hepatitis B vaccine, and later mistakes in over-purchasing H1N1 vaccines at great cost to the French government may have altered public perception, breaking down trust as a result [3].

And, as you may have guessed, social media has a considerable influence here too, particularly because social media algorithms tend to promote content that they expect the user to agree with and hence engage with (or ‘like’), reinforcing beliefs already held. This is not just relevant to medical information either; there is evidence that this played a role in the Brexit referendum as well [4].

These networks make it very easy for the source of misinformation to get lost quickly, so that the claim ends up being cited as fact. A report in the London Student [5] gave a brilliant account of how an incorrect stat about youth turnout during the 2017 general election, once tweeted by a few public figures, suddenly started being quoted by multiple news sites as fact. Similarly, during the 2015-16 Zika virus outbreak in South America, attempts by the World Health Organisation to share information on reducing risk of exposure were drowned out by rumours. The false claims shared included a theory that the effects of the virus were really caused by pesticides, and that the outbreak was part of a “conspiracy against the public” [6]. A follow-up study found that rumours were shared three times more often than accurate information [7].

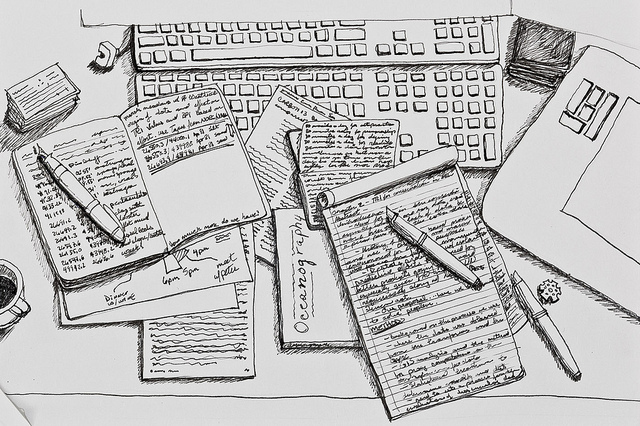

Access to quality journalism may be another issue. New Scientist and The Economist have paywalls – understandably, as exceptional journalism doesn’t usually come for free (unless you are a loyal reader of high-quality student media). The Guardian is one of the few UK broadsheets that doesn’t use a paywall but rather asks its readers for donations. And, while this is one way to make journalism more accessible, it is financially risky – in May this year, they celebrated breaking even.

But there are some great, accurate and free sources of science news out there. Mosaic [8], funded by the Wellcome Trust, publishes precise and well-researched news on biology, medicine, and health technology. All of their articles can be republished for free elsewhere due to their commitment to disseminating factual information to a wide audience. The Conversation [9] is another not-for-profit endeavour which works directly with academics so that they can report on their own research. It covers topics ranging from art and culture to economics and science, and the UK version even has an entire section dedicated to Brexit. However, this too can be imperfect, due to the conflict of interest when reporting on your own work. Academics are used to talking up their own research, especially as a huge aspect of the job involves competing for grants and funding. There is a risk that journalistic scrutiny is missed when academics are left to report on their own research in this way.

How to Inform Friends and Engage With People

Public engagement has to be a two-way street. Simply telling someone “vaccines are safe and if you think otherwise you are stupid and dangerous” is quite obviously not the most productive way to have this discussion; nor is it enough to shower people with the correct information if they are not receptive to hearing it. The BBC tried a different way of tackling this, by inviting people who were unsure about vaccines to present their questions to doctors who engaged with them and answered their queries [10]. In France, doctors have made use of online platforms to directly discuss worries with people who are unsure about vaccination. Asking people about their concerns and acknowledging them could be a much more valuable way of having this conversation and shifting attitudes. This approach may backfire, however, as some studies have found that arming people with information strengthens their idea that they know “more than the experts” [11] on a given topic. Blundering into a dialogue may do more harm than good.

Involving the public in healthcare decisions can also have a role in improving trust. In Scotland, if you wish to carry out research using NHS records, you first need to make an application to a Public Benefit and Privacy panel. This panel includes patient representatives and, along with NHS board members, information governance experts and data controllers, they help to judge whether or not this research could have substantial value for the public – essentially deciding if it is a worthwhile use of the data. NHS data can be hugely informative for public health but must be managed correctly because, while it is anonymised, it still relates to members of the general public. They deserve a say in how and why their information is used, and these panels help to build trust between patients and the medical research community.

Ultimately though, the media still has a significant role to play. Sensationalist headlines give people a false idea of how research works and how long it really takes to get from ‘hypothesis’ to ‘finding’. When it comes to medical news, a good rule of thumb is to treat any headline with the word “cure” in it with a healthy degree of scepticism. With the number of headlines claiming that a “cure for cancer has been found”, it’s no wonder that some misguided people believe a cure exists but is being hidden so pharmaceutical companies can continue profiting from chronic disease.

One attempt at redressing the balance of expectation is being made by the Twitter account @justsaysinmice [12]. They retweet headlines about mouse studies which fail to highlight the fact that the results have not yet been replicated in humans. It’s an important distinction; any findings involving mouse models have to go through further extensive checks before ever being relevant to the clinic.

Journalists and the editorial teams behind the headlines are not the only ones to bear that responsibility. The way university press teams report on findings can have an important impact on the accuracy of a story once it’s picked up by the media [13]. This is important, as many news outlets will only have the press release to go on when writing a story about new research. If the press release is inaccurate, how can the journalists reporting it get the story straight? And all of us at home have a responsibility to only share information that we have read and thought about, rather than simply retweeting something just because it sounds like it might line up with our beliefs from a quick glance at the headline.

Next time you see someone sharing questionable science ‘facts’, challenge yourself to engage in just that: an engaging way. Don’t be condescending, avoid causing offence, and just get them talking. And if you can do that successfully, please, drop me some tips.

This article was specialist edited by Gabriela De Sousa and copy-edited by Dzachary Zainudden.

References

1. https://www.bbc.co.uk/news/health-49394170

2. https://www.nature.com/articles/d41586-019-01937-6

3. https://mosaicscience.com/story/how-france-persuading-its-citizens-get-vaccinated-measles-antivax-vaccines-vaccination/

4. https://www.ted.com/talks/carole_cadwalladr_facebook_s_role_in_brexit_and_the_threat_to_democracy

5. http://londonstudent.coop/malia-bouattia-launch-viral-fake-news-story/

6. https://www.tandfonline.com/doi/full/10.1080/19325037.2018.1473178

7. See previous reference

8. https://mosaicscience.com/

9. https://theconversation.com/uk

10. https://www.bbc.co.uk/news/health-47787908

11. https://www.nytimes.com/2019/07/22/upshot/health-facts-importance-persuasion.html

12. https://twitter.com/justsaysinmice

13. https://digest.bps.org.uk/2019/06/17/psychologists-show-its-possible-to-fix-misleading-press-releases-without-harming-their-news-value/