Shark Attacks, Ice Creams, and the Randomised Trial

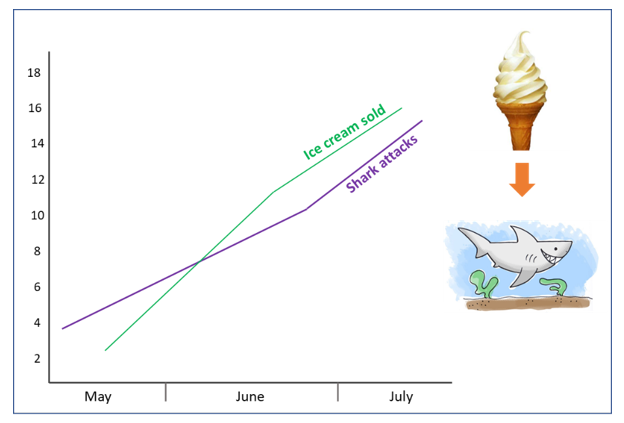

When ice cream consumption rises in summer, so does the number of shark attacks on the coastal beaches of Australia 1. Perhaps this sheds intriguing light on the effects of diet on humans’ appeal to sharks. Could it be that the high fat content of ice cream is a precursor to weight gain, which could make you more attractive to sharks, increasing your odds of being attacked? Or that with their highly developed sense of smell and sensitive electrical receptors, the sharks are able to sense recently eaten ice cream in the stomach? It seems as though junk food can potentially shorten your lifespan in more obscure ways than you thought.

Credit: Sara Cameron

Of course, this seems absurd, and I’ll bet your immediate response is ‘no no no, hot days make people visit the beach’. At the beach, you are more likely to both buy an ice cream and to get in the sea and be attacked by a shark. The correlation between these two variables can actually be explained by hot, sunny days. Hot days cause both these things. But there is a problem. Just because two factors are correlated does not necessarily mean one is directly causing the other to change. Correlation does not imply causation. So why is it that we can find reason in inferring causation for one case, but not in the other? We are instinctively drawn to ‘plausible mechanisms’, rational explanations to tie together observations, based on our understanding of the factors and behaviours involved. We can tell ourselves a believable story about how increased rainfall could cause increased umbrella sales, for example.
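To see how a hidden common cause can manufacture a correlation, here is a minimal simulation sketch. Every number in it is invented for illustration (the temperature range, the sales coefficient, and the `shark_risk` score are assumptions, not real data). Temperature drives both ice cream sales and shark risk, and ice cream never influences sharks, yet the two end up correlated. Comparing only days of similar temperature makes most of that correlation disappear.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_days(n_days=5000, seed=0):
    """Return (temperature, ice_creams, shark_risk) for each simulated day.

    All coefficients are made up. Ice cream sales never feed into shark risk;
    temperature is the only thing the two have in common.
    """
    rng = random.Random(seed)
    days = []
    for _ in range(n_days):
        temperature = rng.uniform(10, 40)  # degrees Celsius
        ice_creams = 20 * temperature + rng.gauss(0, 50)
        shark_risk = 0.01 * temperature + rng.gauss(0, 0.1)
        days.append((temperature, ice_creams, shark_risk))
    return days


def ice_cream_shark_correlation(days):
    """Correlation between ice cream sales and shark risk over the given days."""
    return pearson([d[1] for d in days], [d[2] for d in days])


days = simulate_days()
# Hold the confounder (roughly) fixed by keeping only days of similar temperature.
similar_days = [d for d in days if 24 <= d[0] <= 26]

print("all days:", round(ice_cream_shark_correlation(days), 2))
print("24 to 26 degree days only:", round(ice_cream_shark_correlation(similar_days), 2))
```

The first figure should be clearly positive and the second close to zero, which is the pattern you would expect if hot days, rather than ice cream, are doing the work.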

This is by no means a trivial concept. Practical assumptions about causality have been a catalyst for connecting the dots in science, engineering, and medical research for thousands of years. But, like eating your ice cream in the sea, it can sometimes be dangerous and lead to incorrect and potentially harmful conclusions. Although the above examples are silly, correlation is very often mistaken for causation in ways that are not immediately obvious in the real world.

Take the infamous fallacy ‘vaccines cause autism’ that quickly gained publicity and led to the campaign dubbed ‘The Vaccine War’ by anti-vaccine advocates. The number of vaccines given to young children has risen substantially over the past two decades. During the same period, the number of children diagnosed with autism has increased considerably. This observation led many frenzied parents to choose not to vaccinate their children against harmful diseases like measles, mumps, rubella, and polio. The concept was splashed across the media and unmoderated internet sources. Despite this observed correlation, there are many other possible explanations for the increase in autism diagnoses, including cultural progression, improved screening methods, better awareness, and redefinition of the autistic spectrum.

In order to establish cause-and-effect, we need to go beyond observations and gather separate evidence through carefully planned experiments. The field of experimental design is an area of statistics which helps researchers to plan and interpret experiments. The design depends on which variables you wish to control for and which mechanisms you want to test. The gold standard for uncovering cause-and-effect relationships is a double-blind randomised controlled trial (RCT). These trials are thought to provide the highest form of evidence for causation, and their results are frequently used to guide expert advice on what to eat, how to teach, which medical treatment to choose, whether to worry about pesticides, and so on.

In an RCT, all subjects are kept under the same conditions, except that they are randomly allocated either to the group receiving the variable under question or to a control group. In a double-blind trial, neither the subjects nor the researcher know who has been assigned to which group. This allows the researcher to study the direct effects of the variable of interest, whilst all other variables are, on average, the same in both groups.
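Random allocation can be sketched in a few lines. The example below is only an illustration: the volunteers, their ages, and the helper names `randomise` and `mean_age` are all hypothetical. It shuffles the subjects, splits them in half, and then checks that a trait nobody controlled for (age) comes out roughly balanced between the two groups.

```python
import random


def randomise(subjects, seed=None):
    """Shuffle the subjects and split them into treatment and control halves."""
    rng = random.Random(seed)
    shuffled = list(subjects)  # copy, so the original order is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def mean_age(group):
    """Average age of the people in a group."""
    return sum(person["age"] for person in group) / len(group)


# Hypothetical volunteers; age stands in for any trait that might affect the outcome.
volunteer_rng = random.Random(1)
volunteers = [{"id": i, "age": volunteer_rng.randint(20, 80)} for i in range(1000)]

treatment, control = randomise(volunteers, seed=42)
print("mean age, treatment:", round(mean_age(treatment), 1))
print("mean age, control:  ", round(mean_age(control), 1))
```

Because chance alone decides who goes where, the same balancing applies to traits the researcher never measured, which is what lets a difference in outcomes be pinned on the treatment.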

But even interpretation of meticulous RCTs comes with a warning label. A 2013 study investigating the long-suspected health benefits of a Mediterranean diet concluded that eating this diet supplemented with olive oil caused a 30 percent lower risk of heart attack, stroke, and death from cardiovascular disease compared with a low-fat diet 2. However, when the study design was reviewed, this landmark RCT was retracted on the basis of methodological misconduct. The study was supposed to randomly assign participants to one of three groups: a Mediterranean diet with a minimum of four extra tablespoons of olive oil a day, the same diet but with at least an ounce of mixed nuts, or a low-fat diet. But it was found that of the approximately 7,500 participants in the study, 14% had not actually been randomly assigned. Instead, many married couples were assigned to the same group on the basis of convenience. In one particularly troubling case, a field researcher decided to assign an entire village to a single group, because some residents were complaining that their neighbours were getting free olive oil. The end result is that the study’s overall findings are still accurate in one sense: there is a correlation between the Mediterranean diet and better health outcomes. But in another sense, the paper was entirely wrong: from this evidence alone, the Mediterranean diet cannot be said to cause better health outcomes.

Another issue exists in the correlation vs. causation debate. Even with the best-designed trial, the number of experimental subjects required to rule out all possible explanations and interactions can become astronomical, and it is often not practical or possible to rule everything out. And even with enough data, since the interpretations are based on statistics, there is always a chance, however slim, that your results are simply down to luck. So in reality, there is no way to definitively claim causation. But if we were too sceptical of the logical reasoning formed by the well-trained minds of expert researchers, we might halt progress altogether. If someone suggested that DNA wasn’t a major cause of inherited traits because we can’t truly prove causality, we’d be pretty stumped! The same goes for questioning whether smoking causes lung cancer, whether massive blood loss causes death, and a number of other scientifically supported causal relationships. So, even though causation always comes with a bit of uncertainty, science usually (with the odd exception) does a pretty good job of discovering causal relationships that make sense and work in day-to-day life.
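The point about results arising by chance can be made concrete with a small simulation. This is a sketch under stated assumptions, not a recipe for analysing a real trial: the outcome scores are drawn from a standard normal distribution, the group size is arbitrary, and the function name `false_positive_rate` is made up. Both groups come from exactly the same distribution, so the treatment does nothing, yet a conventional cut-off (a difference more than 1.96 standard errors from zero) still flags some trials as ‘significant’.

```python
import random


def false_positive_rate(n_trials=2000, group_size=50, seed=0):
    """Fraction of no-effect trials that still look 'significant' by chance."""
    rng = random.Random(seed)
    # Standard error of the difference between two means when each outcome
    # has variance 1 (an assumption built into this toy example).
    standard_error = (2 / group_size) ** 0.5
    false_positives = 0
    for _ in range(n_trials):
        # Both groups are drawn from the same distribution: no real effect.
        treatment = [rng.gauss(0, 1) for _ in range(group_size)]
        control = [rng.gauss(0, 1) for _ in range(group_size)]
        difference = sum(treatment) / group_size - sum(control) / group_size
        if abs(difference / standard_error) > 1.96:
            false_positives += 1
    return false_positives / n_trials


rate = false_positive_rate()
print("share of no-effect trials flagged as significant:", rate)
```

With this cut-off, roughly one such trial in twenty should be flagged, which is why a single positive result is rarely treated as the final word.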

To really understand and interpret study results, great care has to be taken to understand exactly what the data implies, and more importantly, what it doesn’t imply. Unfortunately, analysing research methods, statistics, probabilities, and risks is not an everyday skill set wired into human intuition, and it is all too easy to be led astray, even for seasoned researchers. In an era of ‘fake news’ and flashy media headlines, in which abstract findings are taken at face value, we need to cast an ever more critical eye on claims and look deeper into the underlying data and experimental design. We must constantly resist the temptation to see meaning in chance and to confuse correlation and causation in this new post-truth world.

During research for this article, I came across probably my favourite causation conspiracy – that as the number of pirates in the world has decreased over the past 130 years, global warming has gotten steadily worse 3. Clearly, if you truly want to stop global warming, the most impactful thing to do is to become a pirate. Perhaps this is the study that slipped by Donald Trump?

This article was specialist edited by Katrina Wesencraft and copy-edited by Kirstin Leslie.
